The Physical AI Supercycle: Following the Money in the Robotics Buildout

By Alex - February 27, 2026

If you look at the evolution of the artificial intelligence boom, it has followed a very predictable, three-stage roadmap.

Stage 1 (2023-2024): The Screen. This was the era of text and pixels. ChatGPT, Midjourney, and LLMs. The AI was trapped in your browser.

Stage 2 (2024-2025): The Enterprise Agent. The AI learned to use software. Instead of just answering questions, AI “agents” started autonomously booking flights, writing code, and managing corporate supply chains.

Stage 3 (2026 and beyond): The Physical World. The AI gets a body.

We are officially leaving the R&D phase of robotics and entering commercial deployment. The transition from Large Language Models (LLMs) to Large Action Models (LAMs) is expanding profit margins on factory floors right now.

But if you want to invest in this supercycle safely, you can’t just buy a basket of speculative “robot tickers” based on a slick YouTube video. You need to understand the underlying physics and the silicon architecture. You have to think like an engineer.

Here is a deep-dive technical breakdown of the three layers of the Physical AI stack, supported by what CEOs across the industrial landscape are actually saying on their recent earnings calls.

Where Are We in the Trend? (The “Digital Twin” Phase)

To understand where we are, you have to understand how modern robots are built.

Traditional industrial robots are “dumb.” They are hard-coded to do one specific motion (like welding a car door) exactly the same way, a million times in a row. If a part is half an inch out of place, the robot gets confused, the assembly line stops, and alarms go off.

Physical AI changes everything. Thanks to spatial computing and visual AI, the new generation of robots can reason. If a screw is out of place, the robot uses its cameras to see the problem, calculates a new trajectory, reaches out, and fixes it dynamically.

But these robots aren’t trained in the real world—they are trained in the “Matrix.”

Companies use high-end graphics engines to create massive “Digital Twins” of their physical factories. Millions of virtual robots run simulations inside this digital world, learning how to walk, grab, and assemble at 10,000x normal speed. Once the AI brain performs well in the simulation, it is transferred to the physical metal body, usually with some real-world fine-tuning to close the gap between simulation and reality.

We are currently in the heavy infrastructure phase of this trend. The mass consumer rollout of humanoids (like a robot folding your laundry at home) is still years away. The investable reality today is industrial automation, supply chain logistics, and the silicon required to train them.

The Engineer’s View: Why This Time is Different

For decades, robotics relied on Deterministic State Machines and complex Inverse Kinematics. If you wanted a robot arm to pick up an apple, an engineer had to write thousands of lines of code mathematically calculating the exact angles of every joint (shoulder, elbow, wrist) required to reach X/Y/Z coordinates. If the apple moved one inch, the math failed, and the robot grabbed empty air.
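To make the “apple moved one inch” problem concrete, here is a minimal sketch of classical inverse kinematics for a simplified planar arm with just two links (shoulder and elbow). The link lengths, the apple position, and the function names are illustrative assumptions, not taken from any real robot controller.

```python
import math

def two_link_ik(x, y, l1, l2):
    """Joint angles (shoulder, elbow) in radians that put the tip of a
    planar two-link arm at (x, y). Returns one of the two valid solutions."""
    d2 = x * x + y * y
    # Law of cosines: the elbow angle follows from the distance to the target.
    cos_elbow = (d2 - l1 * l1 - l2 * l2) / (2 * l1 * l2)
    if abs(cos_elbow) > 1:
        raise ValueError("target is out of reach")
    elbow = math.acos(cos_elbow)
    shoulder = math.atan2(y, x) - math.atan2(
        l2 * math.sin(elbow), l1 + l2 * math.cos(elbow)
    )
    return shoulder, elbow

def forward_kinematics(shoulder, elbow, l1, l2):
    """Tip position produced by a given pair of joint angles."""
    x = l1 * math.cos(shoulder) + l2 * math.cos(shoulder + elbow)
    y = l1 * math.sin(shoulder) + l2 * math.sin(shoulder + elbow)
    return x, y

L1, L2 = 0.4, 0.4        # hypothetical link lengths in meters
apple = (0.5, 0.3)       # hypothetical apple position in meters
angles = two_link_ik(*apple, L1, L2)

# Replaying the stored angles still reaches the old spot, so if the apple
# shifts one inch (0.0254 m) the gripper misses it by that same inch.
moved_apple = (apple[0] + 0.0254, apple[1])
reached = forward_kinematics(*angles, L1, L2)
miss_m = math.dist(reached, moved_apple)
print(f"miss distance: {miss_m * 1000:.1f} mm")
```

A real six- or seven-joint arm needs far more math than this, which is why the hard-coded approach scaled so poorly.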

Physical AI shifts this to an “End-to-End Neural Network” approach. It’s called “Pixels to Torque.” The robot’s cameras take in visual data (pixels), feed it into a Transformer model, and the AI directly outputs the torque commands for the motors, which the motor drivers turn into electrical current. The robot isn’t following a hard-coded path; it is reasoning about its environment in real time.

To make this happen, capital is flooding into three specific layers.

Layer 1: The Brains (Foundation Models & Edge Compute)

This layer provides the “operating system” and the localized silicon that allows the robot to think without lagging.

The Technical Bottleneck: You cannot run a factory robot entirely on the cloud. If a robot drops a glass, it cannot afford a 50-millisecond round trip to an AWS server in Virginia to figure out how to catch it. It requires massive, low-latency Edge Inference directly on the metal, while constrained by battery power and heat dissipation.
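A quick back-of-the-envelope check shows why that delay matters. The sketch below uses basic free-fall physics to estimate how far a dropped object travels during a single network round trip. The 50-millisecond figure comes from the text above; the function name is just an illustration, and air resistance is ignored.

```python
G = 9.81  # gravitational acceleration in m/s^2

def fall_during_delay(delay_s):
    """Distance (m) and speed (m/s) gained by an object falling from rest
    for delay_s seconds, ignoring air resistance."""
    distance_m = 0.5 * G * delay_s ** 2
    speed_m_s = G * delay_s
    return distance_m, speed_m_s

distance_m, speed_m_s = fall_during_delay(0.050)
print(f"{distance_m * 1000:.1f} mm fallen, now moving at {speed_m_s:.2f} m/s")
```

The glass is already more than a centimeter lower and accelerating before the first cloud response could arrive, and a catch needs many sense-and-correct cycles, not one.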

The Tech: Systems-on-Chip (SoCs) with dedicated Neural Processing Units (NPUs). These allow the robot to process stereoscopic vision locally at extremely high TOPS/watt (Tera Operations Per Second per Watt).

The Key Players:

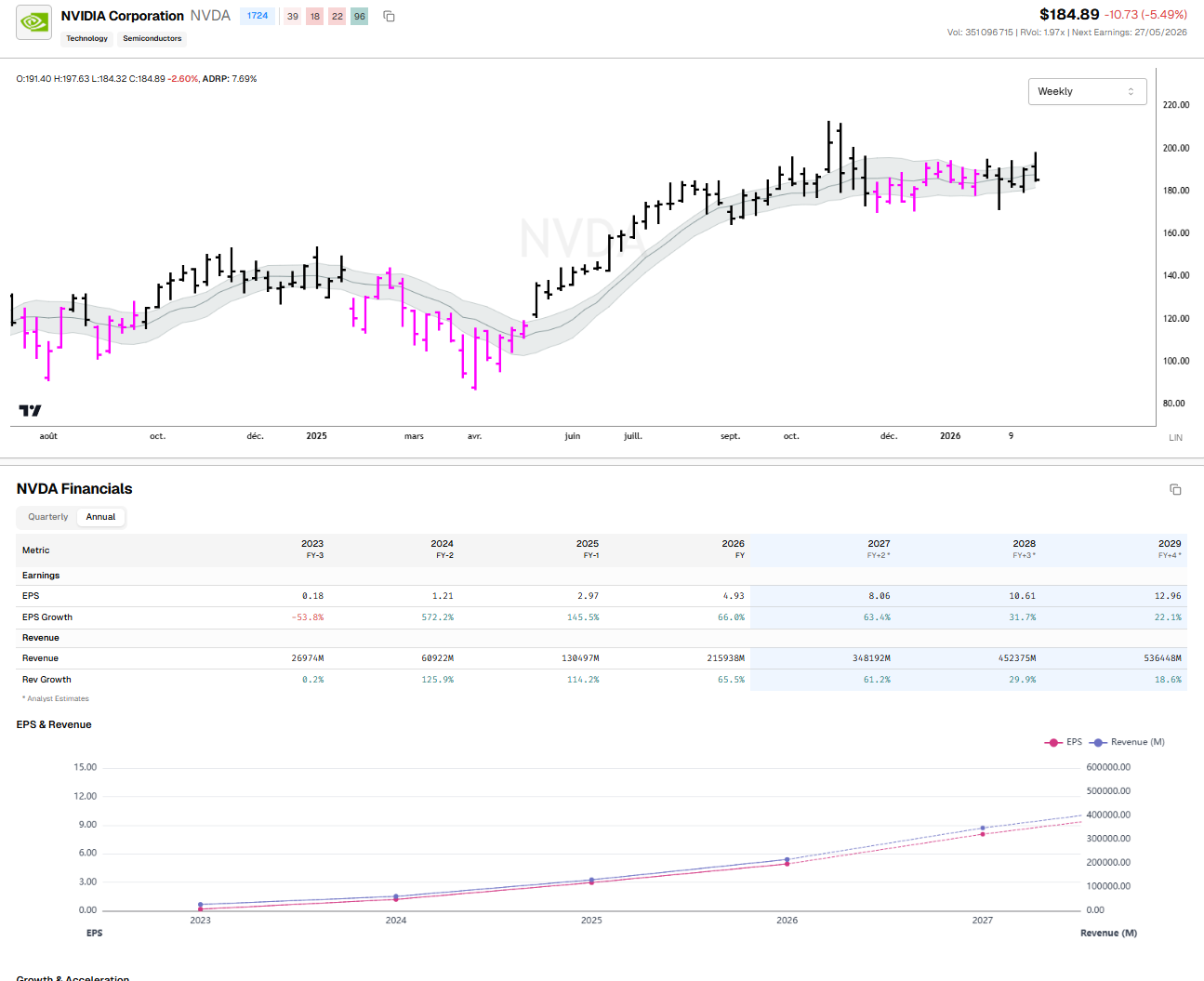

NVIDIA (NVDA): Their Isaac GR00T foundation model provides the underlying architecture, while their Jetson Thor computing platform acts as the onboard brain.

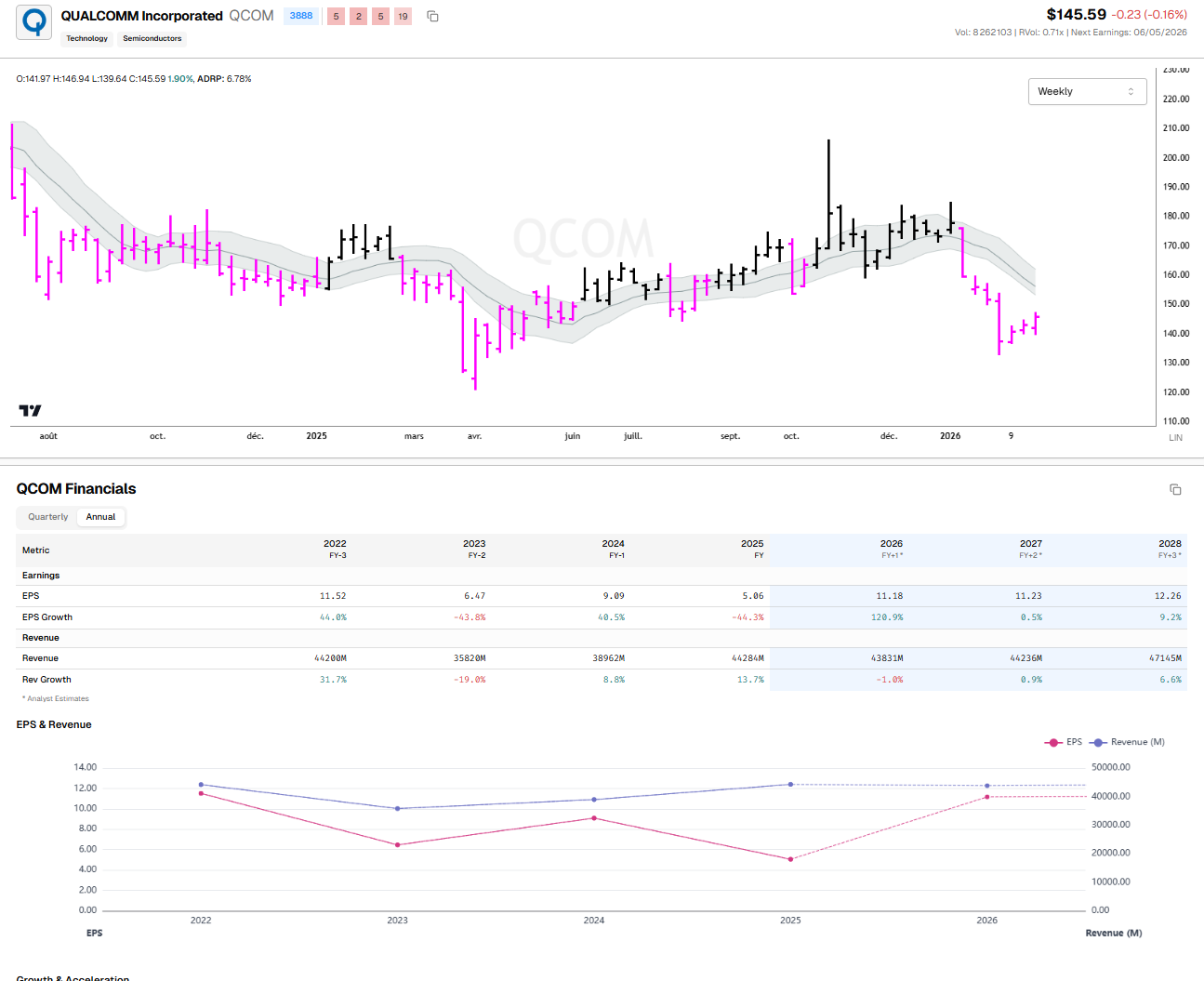

Qualcomm (QCOM): Dominates the low-power edge compute space. Their robotic architectures process multiple 4K camera feeds concurrently while drawing less power than a lightbulb.

Layer 2: The Bodies (Humanoids, AMRs, and Cobots)

This is the OEM layer—the companies bending the metal and deploying the fleets.

The Technical Bottleneck: Safety and payload. Traditional industrial robots are blind, heavy, and will crush a human if they get in the way.

The Tech: Collaborative Robots (Cobots) and Autonomous Mobile Robots (AMRs). These use integrated torque sensors at every joint. Instead of rigidly following a path, if the robot’s arm detects a sudden spike in resistance (e.g., bumping into a human), the motor controller cuts the power in milliseconds. Furthermore, AMRs use SLAM (Simultaneous Localization and Mapping)—fusing LiDAR and visual odometry to build 3D maps of dynamic warehouses on the fly.
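The torque-spike check described above can be sketched in a few lines. This is a simplified illustration, assuming the controller already has an expected torque for each sample; the function name, threshold, and sample values are hypothetical, and production cobots rely on certified safety functions rather than a loop like this.

```python
def first_collision_index(measured_nm, expected_nm, threshold_nm):
    """Index of the first sample where measured joint torque deviates from
    the expected torque by more than threshold_nm, or None if none does."""
    for i, (measured, expected) in enumerate(zip(measured_nm, expected_nm)):
        if abs(measured - expected) > threshold_nm:
            return i
    return None

# Hypothetical torque samples (newton-meters) from one joint, one per
# control cycle. The jump at index 3 stands in for bumping into a person.
measured = [2.0, 2.1, 2.0, 6.5, 7.0]
expected = [2.0, 2.0, 2.0, 2.0, 2.0]

hit = first_collision_index(measured, expected, threshold_nm=3.0)
if hit is not None:
    print(f"resistance spike at sample {hit}: cut motor power")
```

Because the check runs once per control cycle, the reaction time is bounded by the cycle period, which is how the power cut can happen within milliseconds.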

The Key Players:

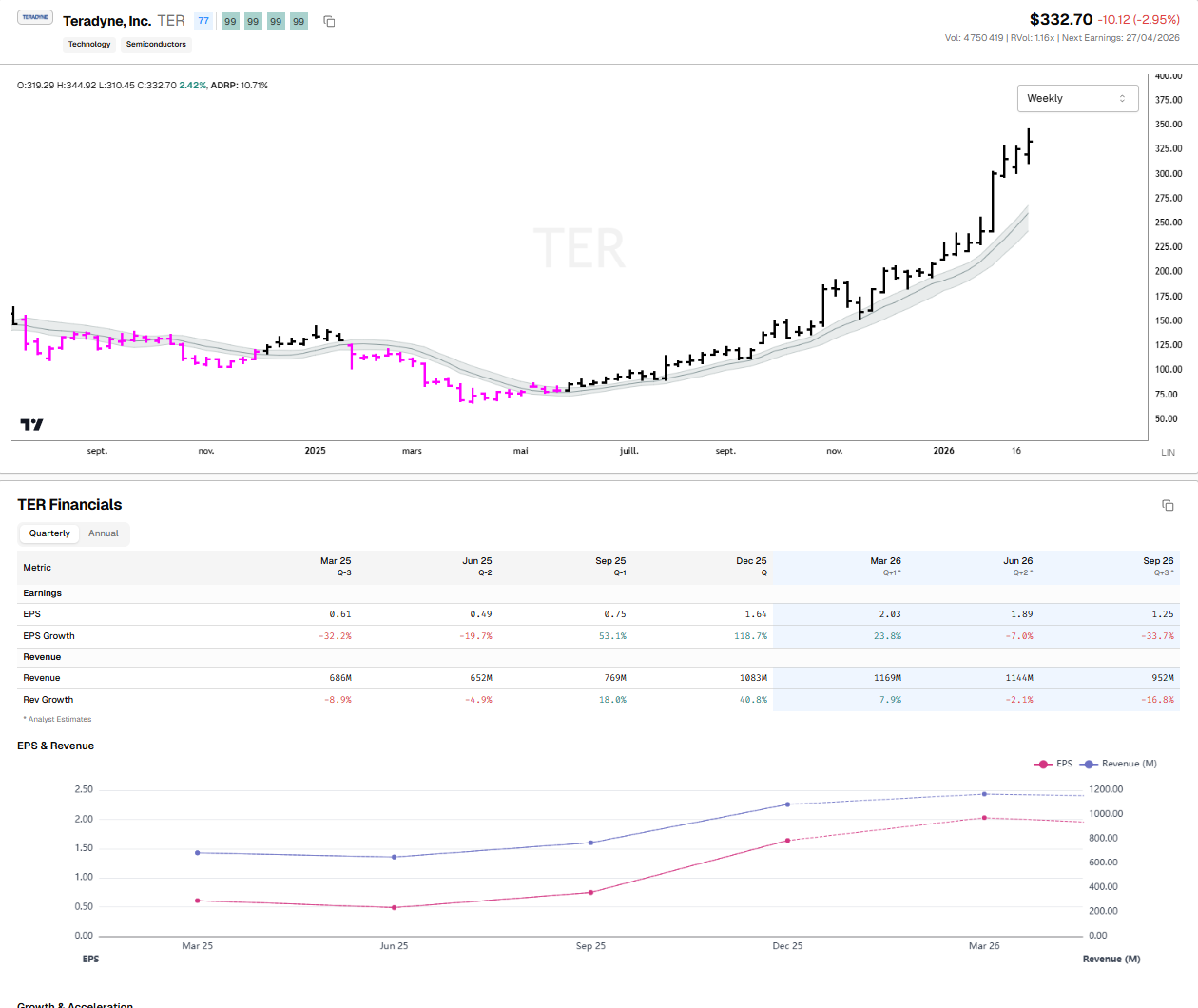

Teradyne (TER): Owners of Universal Robots, the kings of the 6-axis cobot arm.

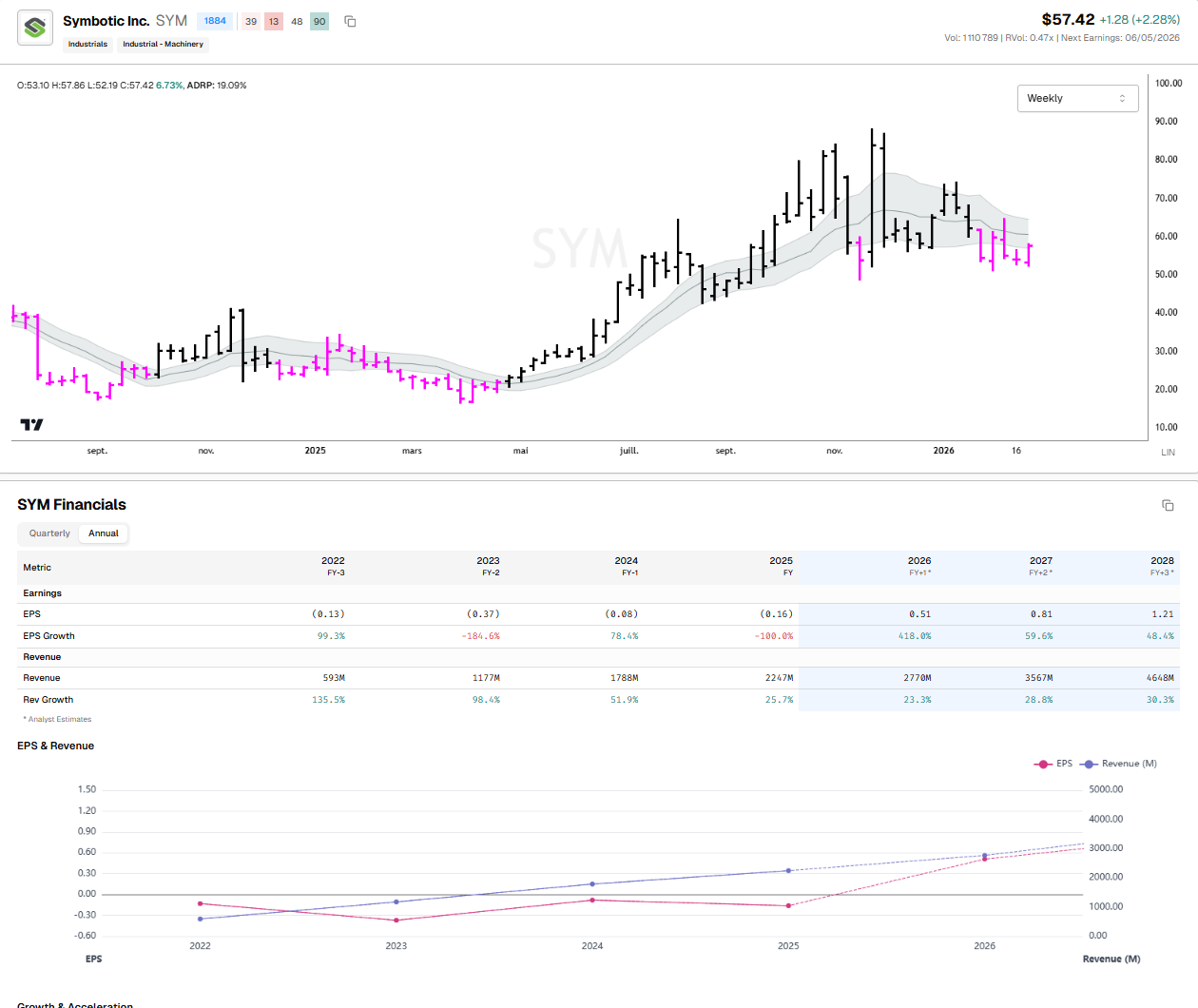

Symbotic (SYM): Pioneers in warehouse AMRs. They build massive “hive-mind” robotic fleets that roam 3D grid structures inside Walmart and Target distribution centers.

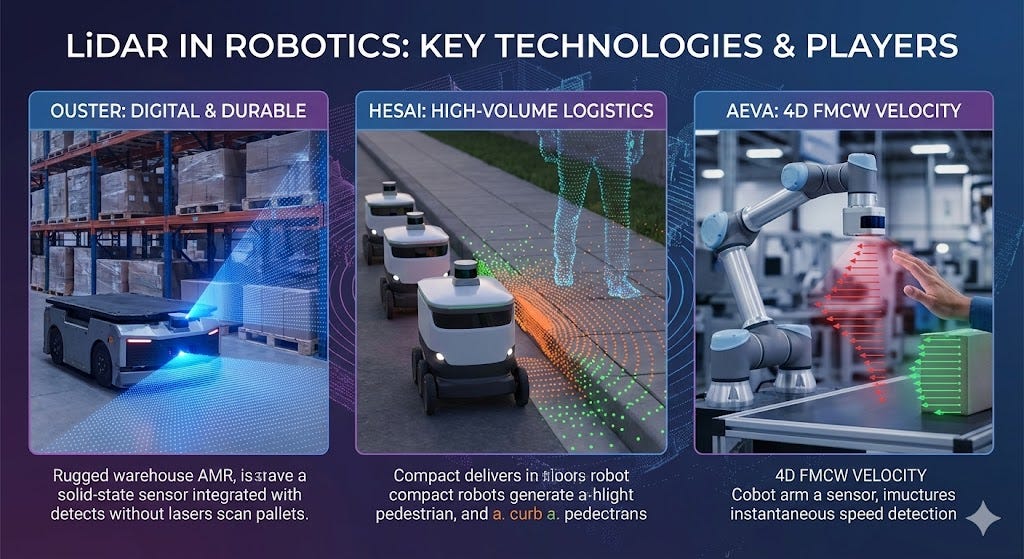

Understanding LiDAR Technology in Robotics

LiDAR (Light Detection and Ranging) is the foundational sensory technology allowing Collaborative Robots (Cobots) and Autonomous Mobile Robots (AMRs) to navigate dynamic, unpredictable environments. Unlike traditional 2D cameras that struggle with depth perception and lighting changes, LiDAR works by emitting thousands of invisible laser pulses per second and measuring the exact time it takes for the light to bounce back from surrounding objects. This “Time-of-Flight” data is instantly processed to generate a high-resolution, 3D point cloud of the robot’s surroundings. When integrated with SLAM (Simultaneous Localization and Mapping) software, LiDAR allows an AMR to continuously map a warehouse on the fly, instantly detecting moving forklifts, dropped pallets, or human workers, and recalculating its path in milliseconds.
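The Time-of-Flight math itself is simple: light travels out and back, so distance is half the round-trip time multiplied by the speed of light. The sketch below converts one pulse into a 3D point. The function names and the sample timing are illustrative assumptions, not any vendor's API.

```python
import math

C = 299_792_458.0  # speed of light in m/s

def tof_distance_m(round_trip_s):
    """Range to the target: half the round-trip path length."""
    return C * round_trip_s / 2.0

def to_point(round_trip_s, azimuth_rad, elevation_rad):
    """Convert one pulse (time plus beam angles) into an (x, y, z) point."""
    r = tof_distance_m(round_trip_s)
    x = r * math.cos(elevation_rad) * math.cos(azimuth_rad)
    y = r * math.cos(elevation_rad) * math.sin(azimuth_rad)
    z = r * math.sin(elevation_rad)
    return x, y, z

# A pulse that returns after about 66.7 nanoseconds hit something ~10 m away.
range_m = tof_distance_m(66.7e-9)
point = to_point(66.7e-9, math.radians(90), 0.0)
print(f"range: {range_m:.2f} m, point: {point}")
```

Repeating this for every pulse across a sweep of beam angles is what builds up the point cloud.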

Ouster, Inc. (NYSE: OUST): The Digital LiDAR Pioneer

Ouster has carved out a dominant position in the robotics and industrial automation space by commercializing “digital LiDAR.” Historically, LiDAR sensors relied on fragile, spinning mechanical lasers to scan environments, which were prone to breaking down under the heavy vibrations of industrial use. Ouster replaced these moving parts with custom semiconductor chips, creating solid-state, highly durable sensors. Following their merger with Velodyne (the original creator of 3D LiDAR), Ouster strategically pivoted away from the hyper-competitive passenger car market to focus almost exclusively on AMRs, smart infrastructure, and automated material handling. Their sensors are designed to provide the wide field-of-view required by warehouse robots to monitor their immediate surroundings and avoid collisions.

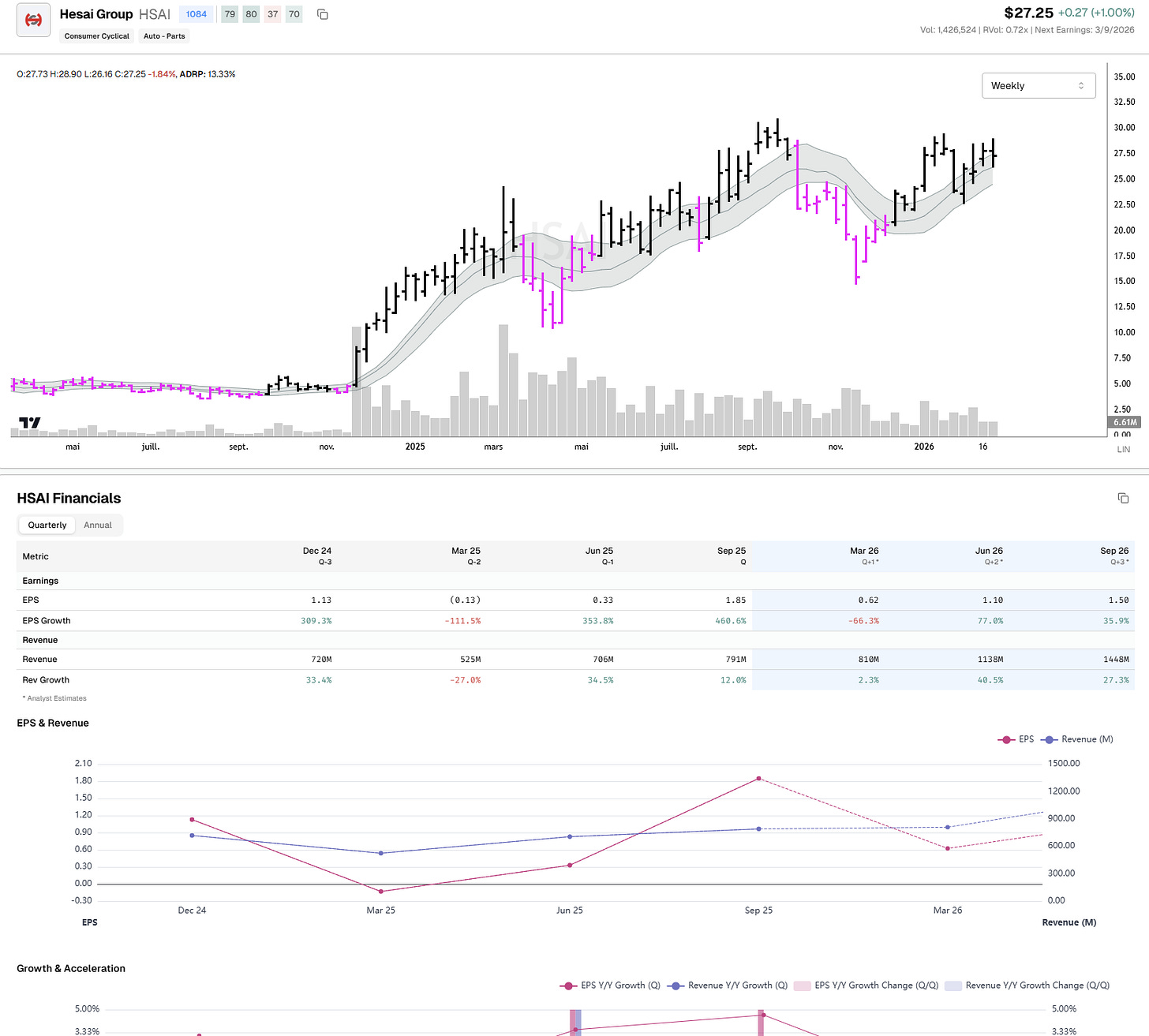

Hesai Group (NASDAQ: HSAI): The High-Volume Manufacturer

Hesai is one of the undisputed global leaders in LiDAR by manufacturing volume. While they are highly visible in the automotive robotaxi sector, they maintain a massive footprint in the logistics and delivery robotics market. Hesai’s core advantage is its deep vertical integration and in-house manufacturing capabilities, which enable it to produce reliable, high-resolution sensors at a price point that makes them economically viable for companies deploying fleets of thousands of AMRs. They offer a diverse portfolio of sensors, including ultra-wide blind-spot LiDARs that are specifically mounted lower on delivery robots and AMRs to detect small obstacles and human feet that primary top-mounted sensors might miss.

Aeva Technologies (NYSE: AEVA): The 4D FMCW Innovator

Aeva represents the next generation of spatial perception, differing fundamentally from the standard “Time-of-Flight” approach used by Ouster and Hesai. Aeva specializes in 4D FMCW (Frequency Modulated Continuous Wave) LiDAR. Instead of just measuring the distance to an object, FMCW technology uses the Doppler shift of the returning light to measure, in the same reading, how fast every point it scans is moving toward or away from the sensor. For Cobots working in close proximity to human workers, this is a critical safety upgrade. If a human unexpectedly reaches toward a moving robot arm, the Aeva sensor doesn’t just register that an object is there; it immediately knows how fast the hand is moving toward the machine, allowing the motor controllers to trigger an emergency stop fractions of a second faster than traditional systems.
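For the curious, the velocity reading comes from the standard Doppler relation: radial velocity equals the frequency shift times the wavelength, divided by two. The sketch below assumes a 1550 nm laser (a common choice for FMCW LiDAR, used here as an assumption rather than a quoted Aeva spec) and treats a positive shift as an approaching object. The function names and sample numbers are hypothetical.

```python
WAVELENGTH_M = 1550e-9  # assumed laser wavelength, not a vendor spec

def radial_velocity_m_s(doppler_shift_hz, wavelength_m=WAVELENGTH_M):
    """Speed along the line of sight; positive means approaching (assumed
    sign convention)."""
    return doppler_shift_hz * wavelength_m / 2.0

def time_to_contact_s(distance_m, closing_speed_m_s):
    """Seconds until contact at constant closing speed, or None if the
    object is not approaching."""
    if closing_speed_m_s <= 0:
        return None
    return distance_m / closing_speed_m_s

# A hand 0.5 m away producing a 1.29 MHz Doppler shift is closing at ~1 m/s.
speed = radial_velocity_m_s(1.29e6)
ttc = time_to_contact_s(0.5, speed)
print(f"closing speed: {speed:.2f} m/s, time to contact: {ttc:.2f} s")
```

A Time-of-Flight sensor would need at least two consecutive frames to estimate the same speed, which is where the time savings come from.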

Layer 3: The Bottlenecks (Actuators & Sensors)

This is the pure “picks and shovels” layer. These are the physical components that every robot needs, regardless of who builds it.

The Technical Bottleneck: Gear backlash and spatial awareness. Standard gears have “play” or “backlash” between the teeth, and the length of the arm magnifies it. Over a 3-foot robotic arm, a 1-millimeter gap at a small shoulder gear can translate to an error of roughly 2 inches at the hand.
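The size of that error depends on the gear's radius, which the example above does not state. Here is a rough worked calculation, assuming an 18 mm pitch radius (a hypothetical value chosen to show where a 2-inch figure could come from): the play becomes a small angle at the gear, and the arm length multiplies it.

```python
MM_PER_INCH = 25.4

def tip_error_mm(play_mm, gear_radius_mm, arm_length_mm):
    """Position error at the end of the arm caused by play at the gear.
    The play subtends an angle of play / radius, swept over the arm length."""
    angle_rad = play_mm / gear_radius_mm
    return arm_length_mm * angle_rad

arm_mm = 36 * MM_PER_INCH  # 3-foot arm
error_mm = tip_error_mm(play_mm=1.0, gear_radius_mm=18.0, arm_length_mm=arm_mm)
print(f"tip error: {error_mm:.1f} mm ({error_mm / MM_PER_INCH:.1f} in)")
```

A larger gear shrinks the error, but it also adds weight and bulk to the joint, which is the trade-off the next technology sidesteps.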

The Tech: Strain Wave Gearing. This is a brilliant mechanical engineering design utilizing a flexible metal cup (flexspline) that deforms inside a rigid circular ring. Because the teeth are always engaged at multiple points simultaneously, there is virtually zero backlash. It allows for massive gear reduction (high torque) in a tiny footprint.
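The “massive gear reduction” falls out of a simple tooth-count formula. In the common arrangement where the outer ring is held fixed and the flexible cup drives the output, the reduction ratio is the flexspline tooth count divided by the difference in tooth counts. The tooth counts below are illustrative, not taken from a specific product.

```python
def strain_wave_reduction(flexspline_teeth, ring_teeth):
    """Input turns per output turn, with the rigid ring held fixed and the
    flexspline as the output. The output turns opposite to the input."""
    if ring_teeth <= flexspline_teeth:
        raise ValueError("the rigid ring must have more teeth than the flexspline")
    return flexspline_teeth / (ring_teeth - flexspline_teeth)

# A two-tooth difference on a 200-tooth flexspline gives a 100:1 reduction
# in a single stage.
ratio = strain_wave_reduction(flexspline_teeth=200, ring_teeth=202)
print(f"reduction: {ratio:.0f}:1")
```

Getting 100:1 out of conventional spur gears would take several stages stacked together, each adding its own play.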

The Key Players:

Harmonic Drive (HSYDF): The long-time global leader in strain wave gears. If a humanoid joint moves smoothly, there is a good chance a Harmonic Drive gear is inside it.

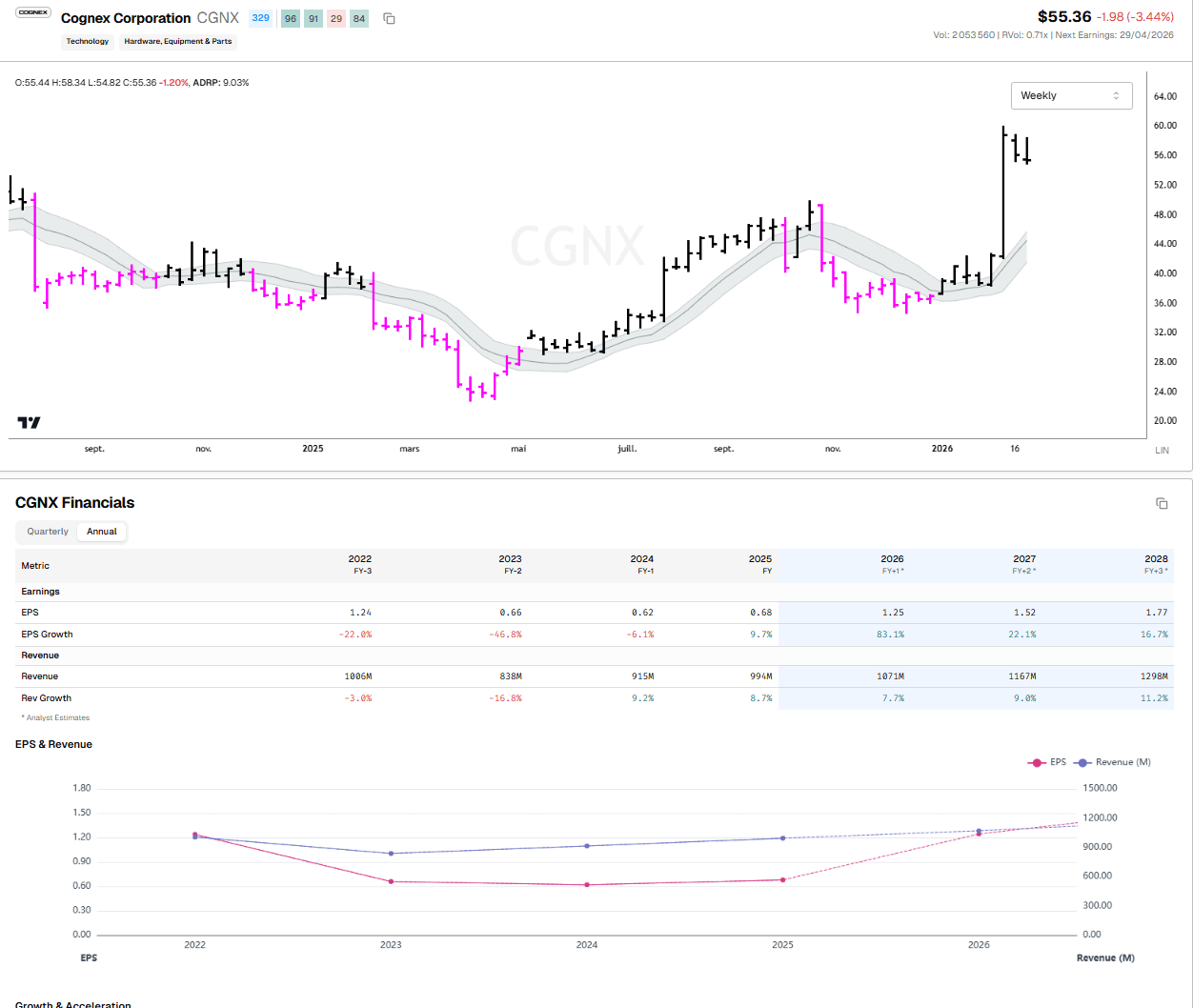

Cognex (CGNX): The leader in Machine Vision. They provide the industrial-grade 3D Time-of-Flight (ToF) sensors and barcode reading tech that act as the robot’s optic nerve.

The Proof is in the Transcripts: Recent Earnings Tells

If you want to know if a trend is real, ignore the product launch videos and listen to what industrial CEOs are telling Wall Street analysts. The deployment phase is already showing up in the cash flows.

1. NVIDIA (Fiscal Q4 2026 Call - Feb 25th)

On this week’s call, Jensen Huang told Wall Street point-blank: “The ChatGPT moment for robotics has arrived.” But the real fundamental tell was in their revenue breakdown. NVIDIA’s “Professional Visualization” segment, which houses the Omniverse platform used to build the Digital Twins mentioned above, just reported a record $1.3 billion in revenue, up a staggering 159% year-over-year. This suggests that the world’s largest manufacturers are already buying the software and compute to simulate their future robotic workforce.

2. Teradyne (Q4 2025 Call - Feb 3rd)

Teradyne (TER) is the blue-chip leader in “cobots” (collaborative robots that work safely alongside humans). During their call earlier this month, CEO Greg Smith noted that AI-related demand represented over 60% of their Q4 revenue.

More importantly, he dropped a massive hint about their Robotics segment, noting they are targeting breakeven in 2026 supported by a massive e-commerce deployment that they expect to “triple-ish” from 2025 to 2026. Hint: When a robotics CEO talks about a massive e-commerce deployment tripling in size, they are almost certainly talking about an Amazon-scale logistics rollout.

The Quote: “Physical AI is already expanding the applications of advanced robotics, and we believe that trend will continue to strengthen.” — Greg Smith, Teradyne CEO

3. Rockwell Automation (Q1 2026 Call - Feb 19th)

Rockwell (ROK) provides the overarching automation systems that connect robots and conveyor belts together. Last week, they delivered a massive earnings beat, with revenue up 12% to $2.11 billion.

The fundamental shift here is software. Rockwell’s “Software and Control” segment saw margins explode to over 31%. At the Citi Global Industrial Conference just a few days ago, CEO Blake Moret highlighted that their recent entry into mobile robots has expanded their total addressable market by $4 to $5 billion. Furthermore, they reported a 30% improvement in labor efficiency at their own smart plant in Singapore, proving the ROI of their own tech.

4. Symbotic (SYM) - Warehouse Automation

Symbotic builds autonomous robotic fleets for massive distribution centers. In their latest earnings call, management highlighted that they are actively deploying AI-driven vision systems that allow their robots to handle mixed-case pallets without human intervention.

“Our AI-enabled robotic systems are not just replacing legacy infrastructure; they are fundamentally rewriting the unit economics of the supply chain.”

5. ABB (ABB) - Global Industrial Robotics

ABB is a Swiss industrial giant. In their recent quarterly update, they explicitly called out a resurgence in robotics orders driven by Western “reshoring.”

“Customers are facing an acute structural labor shortage in manufacturing. The demand for our AI-enabled collaborative robotics segment is accelerating faster than traditional heavy automation as factories demand flexible, easily reprogrammable solutions.”

6. Cognex (CGNX) - Machine Vision

Cognex acts as the “eyes” for factory automation. Their recent call confirmed that the logistics and automotive sectors are aggressively upgrading their vision systems.

“The integration of edge AI into our 3D vision systems has moved from pilot programs to full-scale rollouts. Customers require systems that don’t just ‘see’ a defect, but use neural networks to categorize and adapt to anomalies on the fly.”

7. Zebra Technologies (ZBRA) - Enterprise Visibility

Zebra is a massive player in warehouse tracking and Autonomous Mobile Robots (AMRs) via their Fetch Robotics acquisition. Their latest commentary pointed to a massive upgrade cycle.

“We are seeing strong momentum in our robotics automation portfolio. The shift from fixed conveyor systems to dynamic AMR fleets is a priority for our enterprise retail and logistics customers as they seek to optimize labor costs.”

8. Fanuc (FANUY) - CNC and Robotics Heavyweight

The Japanese automation titan controls the heavy industrial robotics market. While historically relying on traditional programming, they recently highlighted their shift toward AI.

“We are heavily investing in AI integration across our entire robotics portfolio. The ability for our automated systems to self-optimize and perform preventative maintenance using machine learning is becoming a primary purchasing criteria for our largest automotive clients.”

The Bottom Line

The integration of AI into physical robotics has moved past the conceptual R&D stage and is now a measurable capex trend within the industrial and logistics sectors.

Rather than treating “robotics” as a single thematic bucket, investors should view the sector through the lens of its underlying technology stack. By focusing on the critical hardware and compute requirements—from edge-inference NPUs to high-precision strain wave actuators—you can effectively separate companies with durable engineering advantages from those driven primarily by broader AI market sentiment.


Best,

Alex 🛡️✌️